Why Tool-First Maintenance Transformations Fail

The pattern is familiar enough to be predictable. A facilities team identifies a problem—reactive maintenance eating the budget, no visibility into work order volume, PM schedules living on paper or in someone's head—and the solution seems obvious: buy a CMMS. The RFP goes out. Demos happen. A vendor is selected. The system goes live.

Twelve months later, adoption is stalling. Half the team is still texting work requests. The PM schedules that looked great in the demo aren't getting completed. The data in the system is too incomplete to generate the reports leadership was promised. The facilities director is fielding questions about why the CMMS isn't delivering the ROI that justified the purchase.

Industry estimates suggest that 60–80% of CMMS implementations underperform or stall entirely. The standard explanations are poor training, lack of executive buy-in, and insufficient change management. These factors are real, but they're not the root cause. The root cause is architectural: the organization started with the tool and skipped the operating system. Understanding why CMMS implementations fail starts here.

The Tool-First Trap

Tool-first thinking is the assumption that software creates process. It doesn't. Software amplifies existing process—and if your existing process is chaotic, undefined, or inconsistent, the software will amplify exactly that.

Here's what tool-first looks like in practice: The RFP goes out before workflows are mapped. Nobody has documented how a work request should flow from submission to completion. Nobody has agreed on asset naming conventions. Nobody has defined what a "closed" work order actually means. But the procurement timeline is set, the demos are scheduled, and the decision needs to happen by end of quarter.

The CMMS gets selected based on feature lists and pricing. It gets configured by IT or an implementation consultant who has never walked your buildings. Training is a one-day event where technicians learn which buttons to press but not why the process matters. The system goes live, and within weeks the workarounds start: texts instead of work orders, personal spreadsheets instead of the asset register, sticky notes instead of PM completion logs.

Contrast this with process-first thinking. Before selecting any tool, you understand your current state: what maintenance maturity stage are you at? You document your workflows: how should work enter, get prioritized, get assigned, and get closed? You clean your data: are your assets named consistently, located accurately, and rated by criticality? You align your team: do the people who will use this system every day understand why it matters and how it fits into their work?

Process-first organizations don't have better luck with CMMS implementations. They have better foundations.

What Actually Breaks When You Start with the Tool

The failures that follow a tool-first implementation are specific and predictable. Understanding them helps explain why CMMS implementation failure is so common—and why the standard fixes (more training, better change management) often don't work.

The system doesn't match the workflow. The CMMS was configured with a six-field work order form that requires asset selection, priority level, building, floor, category, and description. Your team needs to report a leaking pipe from a phone in 30 seconds. The form takes four minutes. The text to the maintenance lead takes 10 seconds. The text wins—and the work order never gets created. The system is technically capable. It's just not matched to how work actually happens.

Data goes in dirty and stays dirty. Without a clean asset register and agreed naming conventions established before launch, the database becomes unusable within months. The same HVAC unit appears as "RTU-1," "Rooftop Unit Gym," and "old unit on the gym roof" in three different work orders. Location data is inconsistent. Criticality was never defined. When a facilities director tries to pull a report on HVAC maintenance costs, the data is too fragmented to mean anything.
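To make the fragmentation concrete, here is a minimal Python sketch. The work orders, dollar amounts, and alias table are hypothetical, invented only for illustration; the three asset names come from the example above. It shows how the same unit's costs split across three "assets" when names are free text, and how they consolidate once each name maps to one canonical ID.

```python
from collections import defaultdict

# Hypothetical work orders: (asset name as typed, cost in dollars).
work_orders = [
    ("RTU-1", 420.0),
    ("Rooftop Unit Gym", 310.0),
    ("old unit on the gym roof", 150.0),
]

# Hypothetical alias table mapping free-text names to one canonical ID.
ALIASES = {
    "rtu-1": "RTU-1",
    "rooftop unit gym": "RTU-1",
    "old unit on the gym roof": "RTU-1",
}

def cost_by_asset(orders, aliases=None):
    """Sum cost per asset name, optionally resolving names through an alias table."""
    totals = defaultdict(float)
    for name, cost in orders:
        key = name.strip()
        if aliases is not None:
            # Fall back to the typed name when no alias is known.
            key = aliases.get(key.lower(), key)
        totals[key] += cost
    return dict(totals)

raw = cost_by_asset(work_orders)             # three separate entries
clean = cost_by_asset(work_orders, ALIASES)  # one entry with the full total
print(len(raw), clean)
```

An alias table like this is a cleanup aid for data that is already dirty. Agreeing on names before launch avoids needing one at all.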

PM schedules are aspirational, not realistic. The implementation team loaded manufacturer-recommended PM schedules for every asset in the system. On paper, the preventive maintenance program looks comprehensive. In practice, the schedule requires 40 hours of PM labor per week and the team has 15 hours of available capacity after reactive work. Completion rates collapse to 20–30%. The PM program exists in the CMMS but not in the buildings.
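The capacity gap can be checked with simple arithmetic before any schedule is loaded. The sketch below uses the 40-hour and 15-hour figures from the example above; the function name is an assumption made for illustration. It computes the best-case completion rate if every available hour went to PM.

```python
def max_pm_completion_rate(scheduled_hours: float, available_hours: float) -> float:
    """Upper bound on PM completion, assuming every available hour goes to PM."""
    if scheduled_hours <= 0:
        raise ValueError("scheduled_hours must be positive")
    return min(1.0, available_hours / scheduled_hours)

# Figures from the example above: 40 scheduled hours, 15 available hours.
ceiling = max_pm_completion_rate(40, 15)
print(f"Best-case PM completion rate: {ceiling:.1%}")  # 37.5%
```

A ceiling of 37.5% means the schedule cannot be completed even under ideal conditions. Once interruptions and travel time are counted, real completion would be expected to land below that ceiling, which is consistent with the 20–30% described above.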

Adoption is mandated but not supported. Management requires CMMS use but hasn't invested in making it easier than the old way. The system is a burden, not a benefit. Shadow systems—group texts, shared spreadsheets, paper logs—emerge because they solve real problems faster than the official tool. The CMMS becomes a compliance exercise: data gets entered because it's required, not because it's useful.

What to Build Before You Buy (Or Re-Build After a Failed Rollout)

The five foundations below aren't optional. They're the prerequisites that determine whether a CMMS implementation succeeds or stalls. If you're pre-purchase, build them first. If you're post-implementation and struggling, go back and build them now. It's not too late—but the longer you wait, the harder the rebuild.

Foundation 1 — A Clear Picture of Your Current State

What maintenance maturity stage is your organization actually at? Not where you want to be—where you are. What's your ratio of reactive to planned work? How many assets do you manage, and how are they documented today? What percentage of maintenance requests enter through the official system versus through texts, emails, and hallway conversations?
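Two of these questions reduce to simple counts. The sketch below assumes a hypothetical one-month log of requests, each tagged with a work type and an intake channel; the data and labels are invented for illustration and would in practice come from whatever records exist, including the unofficial ones.

```python
from collections import Counter

# Hypothetical one-month log: (work type, intake channel) per request.
requests = [
    ("reactive", "text"),
    ("reactive", "cmms"),
    ("planned", "cmms"),
    ("reactive", "hallway"),
    ("reactive", "email"),
]

def share(counts: Counter, key: str) -> float:
    """Fraction of all counted items that fall under `key`."""
    total = sum(counts.values())
    return counts[key] / total if total else 0.0

by_type = Counter(work_type for work_type, _ in requests)
by_channel = Counter(channel for _, channel in requests)

reactive_share = share(by_type, "reactive")  # 4 of 5 requests
official_share = share(by_channel, "cmms")   # 2 of 5 requests
print(f"Reactive: {reactive_share:.0%}, via official system: {official_share:.0%}")
```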

This honest assessment tells you what the CMMS needs to support right now—not in an ideal future state. A Stage 1 organization (fully reactive, no consistent tracking) needs a CMMS configured for simple work order intake and basic reporting. Loading it with advanced PM scheduling and asset condition tracking on day one is building features for a team that isn't ready to use them.

Foundation 2 — Defined Processes That Exist Independent of Software

How should a work request flow from submission to completion? Who triages? What determines priority? What does a "complete" work order look like—what information must be captured at closure? How are PMs assigned, and who is accountable for completion?

These decisions should be made by people, documented on paper or a whiteboard, and agreed upon by the team before any CMMS configuration begins. If the process is undefined, the software will impose its own default workflow—and that default was designed for a generic organization, not yours.
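One way to test whether a process is actually defined is to write it down as explicit states and allowed transitions. The Python sketch below is an assumption for illustration, not a prescribed workflow: the status names, the reopen rule, and the required closure fields are hypothetical stand-ins for whatever your team agrees on.

```python
from enum import Enum
from typing import Dict, List, Set

class Status(Enum):
    SUBMITTED = "submitted"
    TRIAGED = "triaged"
    ASSIGNED = "assigned"
    COMPLETED = "completed"
    CLOSED = "closed"

# Hypothetical rules: which status may follow which.
ALLOWED: Dict[Status, Set[Status]] = {
    Status.SUBMITTED: {Status.TRIAGED},
    Status.TRIAGED: {Status.ASSIGNED},
    Status.ASSIGNED: {Status.COMPLETED},
    # Completed work can be closed, or sent back if closure details are missing.
    Status.COMPLETED: {Status.CLOSED, Status.ASSIGNED},
    Status.CLOSED: set(),
}

# Hypothetical definition of what must be captured at closure.
REQUIRED_AT_CLOSURE = ("asset_id", "labor_hours", "resolution_notes")

def can_move(current: Status, target: Status) -> bool:
    """True if the agreed workflow allows this transition."""
    return target in ALLOWED[current]

def missing_at_closure(work_order: Dict[str, object]) -> List[str]:
    """List required closure fields that are absent or blank."""
    return [f for f in REQUIRED_AT_CLOSURE if work_order.get(f) in (None, "")]

print(can_move(Status.SUBMITTED, Status.CLOSED))
print(missing_at_closure({"asset_id": "RTU-01", "labor_hours": 1.5}))
```

If the team cannot fill in a table like `ALLOWED` on a whiteboard, the process is not yet defined well enough to configure in software.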

Foundation 3 — A Clean Asset Register

Standardized names. Accurate locations. Basic specifications: manufacturer, model, installation date or approximate age. A criticality rating—even a simple high/medium/low—that reflects each asset's operational importance.

This is the data layer. If asset data is wrong or incomplete, every report generated from it is unreliable. Every PM schedule assigned to it is misrouted. Every cost analysis based on it is inaccurate. Clean asset data before launch isn't a nice-to-have. It's the difference between a CMMS that generates insight and one that generates noise.
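A register with these properties can be checked mechanically before launch. The sketch below is illustrative only: the `TYPE-NN` naming pattern, the field names, and the sample values (including the manufacturer "Acme") are assumptions, not a recommended standard. It models one asset record and reports where a record breaks the agreed rules.

```python
import re
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional

class Criticality(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"

# Assumed naming convention for illustration: TYPE-NN, e.g. "RTU-01".
NAME_PATTERN = re.compile(r"^[A-Z]{2,5}-\d{2}$")

@dataclass(frozen=True)
class Asset:
    name: str
    building: str
    location: str
    manufacturer: str
    model: str
    criticality: Criticality
    install_year: Optional[int] = None  # approximate age is acceptable

def register_problems(asset: Asset) -> List[str]:
    """Return human-readable problems with one register entry."""
    problems = []
    if not NAME_PATTERN.match(asset.name):
        problems.append(f"name {asset.name!r} does not follow the convention")
    for field_name in ("building", "location", "manufacturer", "model"):
        if not getattr(asset, field_name).strip():
            problems.append(f"{field_name} is blank")
    return problems

good = Asset("RTU-01", "Gym", "Roof", "Acme", "X100", Criticality.HIGH, 2012)
bad = Asset("old unit on the gym roof", "Gym", "", "Acme", "X100", Criticality.HIGH)
print(register_problems(good))
print(register_problems(bad))
```

Making criticality a required field with only three allowed values forces the rating decision to happen during data cleanup rather than after the first unreliable report.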

Foundation 4 — Staff Alignment and Buy-In

The people who will use the system every day—technicians, custodians, maintenance coordinators—need to believe it's better than what they were doing before. This belief doesn't come from a training session. It comes from involving them in the selection and configuration process.

Ask your team what frustrates them about the current process. Show them how the CMMS addresses those specific frustrations. Let them test the mobile experience and provide feedback before launch. When technicians see their input reflected in the system, adoption shifts from compliance to ownership.

Foundation 5 — Realistic Expectations About the Timeline

A maintenance transformation is 12–18 months of sustained effort, not a six-week software implementation. The CMMS goes live in weeks. Building the habits, data quality, and process discipline that make it valuable takes quarters.

Set leadership expectations accordingly. Month 1 is about getting work into the system consistently. Month 3 is about data quality and completion standards. Month 6 is about launching PM on your most critical assets. Month 12 is about using the data to make capital planning decisions. Each milestone builds on the last. Promising "full ROI" at 90 days creates a deadline that the team can't meet and that leadership will later hold against them.

How to Recover from a Failed CMMS Rollout

If you're reading this post-implementation and recognizing the patterns described above, you're not alone. Many facilities teams are in this position: they have a CMMS, it's partially adopted, the data is incomplete, and they're not getting the value they expected.

The recovery path is the same as the prevention path—you just start from where you are instead of where you wish you were.

First, stop blaming adoption and start auditing the system. Is the work order submission process actually easier than the workarounds your team is using? Is the asset register accurate? Are PM schedules realistic given your team's capacity? If the answer to any of these is no, fix the system before demanding more from the people using it.

Second, find and migrate the shadow systems. The spreadsheets, texts, and paper logs your team is using contain real maintenance data. Import it. This closes data gaps and shows your team that their work outside the system wasn't wasted.

Third, simplify before you expand. If the CMMS is configured with features your team isn't using, strip it back to essentials: work order intake, assignment, completion, and basic reporting. Get adoption right on the fundamentals before adding PM scheduling, asset management, and advanced analytics.

Fourth, re-engage your team. The technicians who gave up on the system six months ago had reasons. Ask what those reasons were. Fix them. Then invite the team back, not with a mandate, but with a system that's actually better than their workaround.

Frequently Asked Questions

Why do most CMMS implementations fail?

Most CMMS implementations fail because organizations start with the tool instead of the process. Without clean asset data, defined workflows, and staff buy-in established before launch, the system amplifies existing chaos rather than organizing it. The tool becomes a data entry burden rather than an operational engine, and teams revert to the workarounds that feel easier.

What should I do before implementing a CMMS?

Before implementing a CMMS, audit your current maintenance processes, build a clean asset register with standardized names and criticality ratings, define your work order workflow from intake to closure, and get buy-in from the maintenance team who will use the system daily. These foundations determine whether the implementation succeeds or stalls.

Can a failed CMMS implementation be saved?

Yes, but it requires going back to build the foundations that were skipped. That means cleaning up the asset database, simplifying the work order process to close usability gaps, migrating shadow system data into the CMMS, and re-engaging the team with a system that's been fixed to match how they actually work. It's a reset, not a restart—the system stays, but the approach changes.

How is a maintenance operating system different from a CMMS?

A CMMS is a tool—software that tracks work orders, assets, and PM schedules. A maintenance operating system is the full framework of processes, data standards, team practices, and technology that determines how maintenance is managed across an organization. A CMMS is one component of the operating system, not a substitute for it. Organizations that treat a CMMS as the entire solution are the ones most likely to see implementations stall.

The Tool Is a Layer, Not the Foundation

The facilities management industry has been sold a story for decades: buy the right tool and your maintenance problems will be solved. Vendors have every incentive to reinforce this narrative. The RFP process reinforces it. The conference demo circuit reinforces it.

But the organizations that actually transform their maintenance operations don't start with a tool. They start with an honest assessment of where they are. They define processes before configuring software. They clean their data before generating reports. They earn their team's buy-in before mandating system use.

The CMMS matters. But it's a layer of the operating system—not the operating system itself. Get the foundations right, and the tool delivers. Skip them, and you'll be looking for a different tool in 18 months.

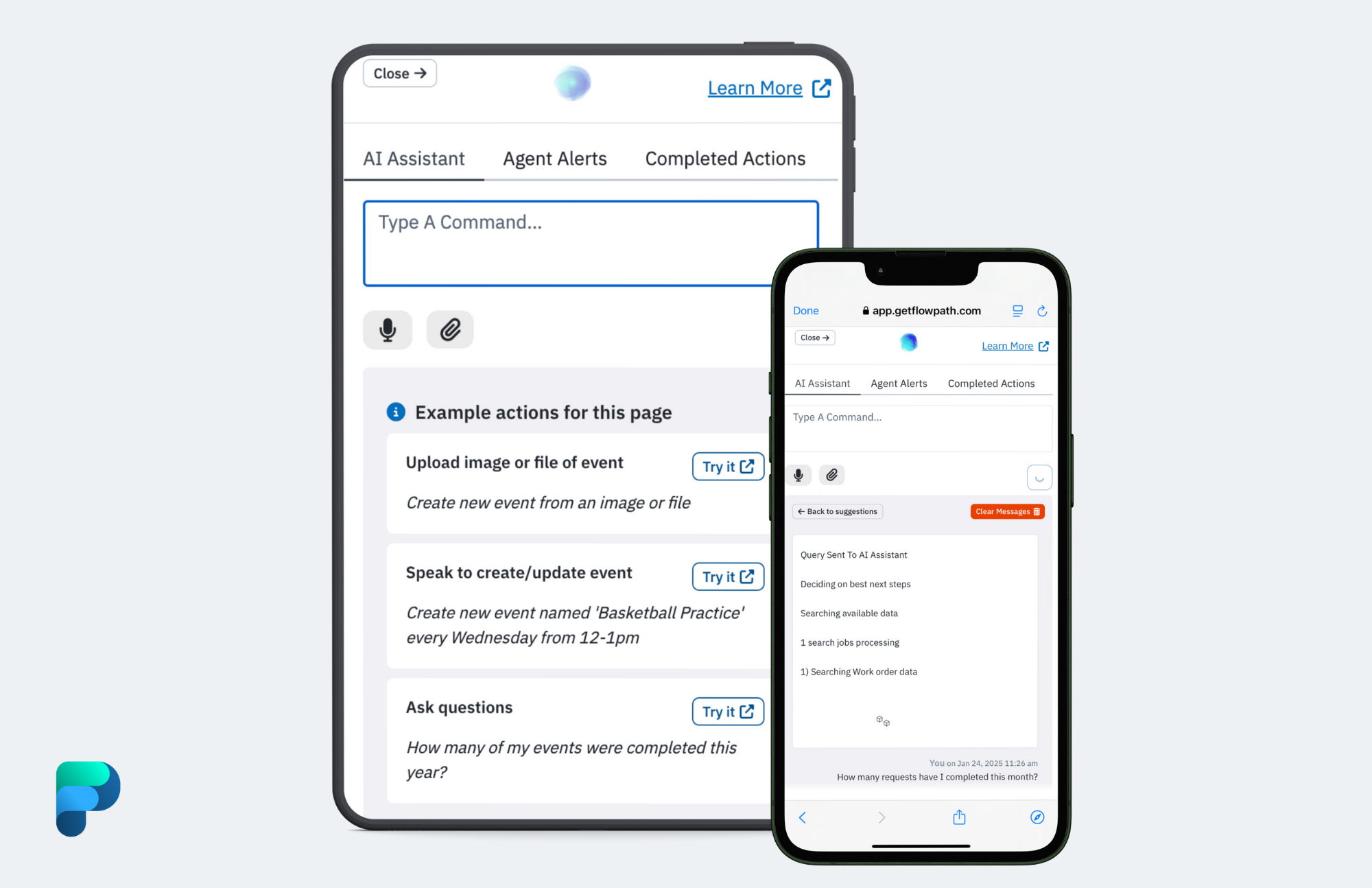

Evaluating a CMMS—or re-evaluating after a stalled rollout? Let's talk about how to build the foundation first. Request a demo of FlowPath and we'll show you what a process-first implementation looks like.